Developments in the self-driving car world can sometimes be a bit dry: a million miles without an accident, a 10 percent increase in pedestrian detection range, and so on. But this research has both an interesting idea behind it and a surprisingly hands-on method of testing: pitting the vehicle against a real racing driver on a course.

To set expectations: this isn’t a stunt. The test is warranted by the nature of the research, and the two weren’t trading positions, jockeying for entry lines, or rubbing bumpers. They ran separately, and the researcher, whom I contacted, politely declined to provide the actual lap times. This is science, people. Please!

The question that Nathan Spielberg and his colleagues at Stanford wanted to answer concerns how an autonomous vehicle operates under extreme conditions. The simple fact is that a huge proportion of the miles driven by these systems are at normal speeds, in good conditions. And most obstacle encounters are similarly ordinary.

If the worst should happen and a car needs to exceed these ordinary bounds of handling — specifically friction limits — can it be trusted to do so? And how would you build an AI agent that can do so?

The researchers’ paper, published today in the journal Science Robotics, begins with the assumption that a physics-based model just isn’t adequate for the job. These are computer models that simulate the car’s motion in terms of weight, speed, road surface, and other conditions. But they are necessarily simplified and their assumptions are of the type to produce increasingly inaccurate results as values exceed ordinary limits.
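To give a feel for how simplified such a model can be, here is a minimal sketch of a point-mass cornering limit. It is an illustration of the general idea, not the model from the paper: the function name, the corner radius, and the friction coefficients are assumptions chosen for the example.

```python
import math

G_MPS2 = 9.80665  # standard gravity in m/s^2


def max_corner_speed(radius_m, friction_coeff):
    """Point-mass friction limit: lateral acceleration v^2 / r may not
    exceed friction_coeff * g, so v_max = sqrt(friction_coeff * g * r)."""
    return math.sqrt(friction_coeff * G_MPS2 * radius_m)


# Hypothetical inputs: a 100 m radius corner on a grippy and a slippery surface.
dry_speed = max_corner_speed(100.0, 0.95)
wet_speed = max_corner_speed(100.0, 0.50)
print(f"grippy: {dry_speed:.1f} m/s, slippery: {wet_speed:.1f} m/s")
```

A model like this treats the whole car as a single point with a single friction number, which is exactly the sort of assumption that stops holding once the tires start to slide.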

Imagine a simulator that simplifies each wheel to a point or a line, when during a slide it matters a great deal which side of the tire is experiencing the most friction. Simulations that detailed are beyond what current hardware can run quickly or accurately enough. But their results can be summarized as inputs and outputs, and that data can be fed into a neural network, one that turns out to be remarkably good at taking turns.

The simulation provides the basics of how a car of this make and weight should move when it is going at speed X and needs to turn at angle Y. Obviously it’s more complicated than that, but you get the idea. The model then consults its training, but is also informed by real-world results, which may differ from theory.

So the car goes into a turn knowing that, theoretically, it should have to move the wheel this much to the left, then this much more at this point, and so on. But the sensors in the car report that despite this, the car is drifting a bit off the intended line — and this input is taken into account, causing the agent to turn the wheel a bit more, or less, or whatever the case may be.
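The combination described above, a prediction from the model plus a correction from what the sensors report, can be sketched in a few lines. This is a minimal illustration and not the researchers’ controller: every function name, gain, and vehicle parameter here is hypothetical, and a simple formula stands in for the trained neural network.

```python
def feedforward_steer(speed_mps, curvature_per_m):
    """Hypothetical stand-in for the learned model: maps speed and path
    curvature to a steering angle in radians. A real system would query
    a trained network here; this formula is only an assumption."""
    wheelbase_m = 2.5        # assumed value, not from the paper
    understeer_gain = 0.002  # assumed value, not from the paper
    return curvature_per_m * (wheelbase_m + understeer_gain * speed_mps ** 2)


def steering_command(speed_mps, curvature_per_m, lateral_error_m, feedback_gain=0.1):
    """Add a correction proportional to the measured drift off the line.
    Convention (assumed): positive angle steers left, and a positive
    lateral error means the car has drifted to the right of the line."""
    predicted = feedforward_steer(speed_mps, curvature_per_m)
    correction = feedback_gain * lateral_error_m
    return predicted + correction


# Same corner, once exactly on the line and once 0.3 m wide of it.
on_line = steering_command(40.0, 0.01, lateral_error_m=0.0)
drifting = steering_command(40.0, 0.01, lateral_error_m=0.3)
print(on_line, drifting)
```

When the car is on the line, the command is just the model’s prediction; once it drifts, the measured error nudges the wheel further in the direction that brings it back.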

And where does the racing driver come into it, you ask? Well, the researchers needed to compare the car’s performance with a human driver who knows from experience how to control a car at its friction limits, and that’s pretty much the definition of a racer. If your tires aren’t hot, you’re probably going too slow.

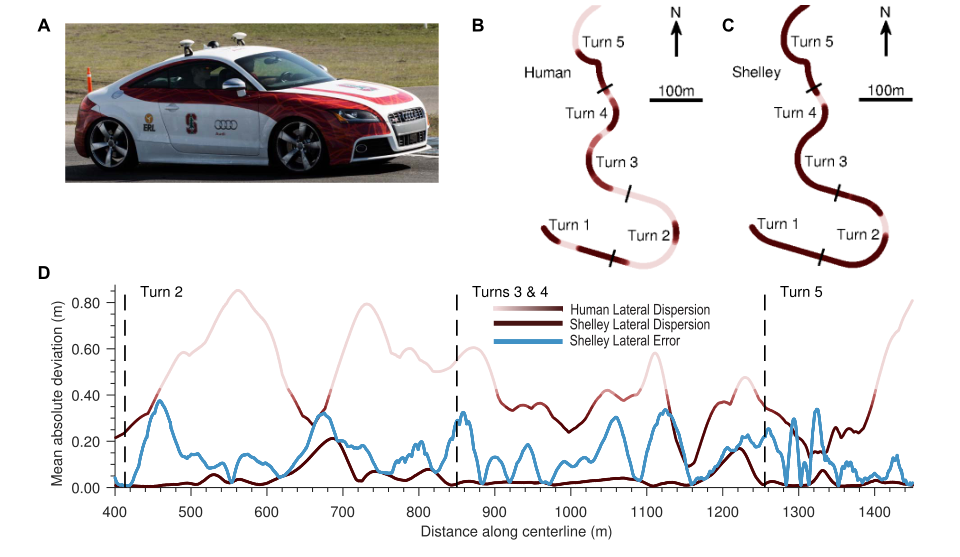

The team had the racer (a “champion amateur race car driver,” as they put it) drive around the Thunderhill Raceway Park in California, then sent Shelley — their modified, self-driving 2009 Audi TTS — around as well, ten times each. And it wasn’t a relaxing Sunday ramble. As the paper reads:

Both the automated vehicle and human participant attempted to complete the course in the minimum amount of time. This consisted of driving at accelerations nearing 0.95g while tracking a minimum time racing trajectory at the physical limits of tire adhesion. At this combined level of longitudinal and lateral acceleration, the vehicle was able to approach speeds of 95 miles per hour (mph) on portions of the track.

Even under these extreme driving conditions, the controller was able to consistently track the racing line with the mean path tracking error below 40 cm everywhere on the track.

In other words, while pulling nearly a G and approaching 95 mph, the self-driving Audi averaged less than 40 cm, about a foot and a third, off its ideal racing line. The human driver showed much wider variation, but that isn’t counted as error: they were changing the line for their own reasons.
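For readers who want to check the units, the three figures quoted from the paper convert as follows. The conversion constants are standard definitions; the variable names are just for this sketch.

```python
CM_PER_FOOT = 30.48    # exact, by definition of the international foot
MPS_PER_MPH = 0.44704  # exact, by definition of the international mile
G_MPS2 = 9.80665       # standard gravity in m/s^2

tracking_error_ft = 40 / CM_PER_FOOT  # 40 cm mean path tracking error
top_speed_mps = 95 * MPS_PER_MPH      # 95 mph peak speed
peak_accel_mps2 = 0.95 * G_MPS2       # 0.95 g peak acceleration

print(f"{tracking_error_ft:.2f} ft, {top_speed_mps:.1f} m/s, {peak_accel_mps2:.2f} m/s^2")
```

So 40 cm works out to roughly 1.3 feet, 95 mph to roughly 42.5 meters per second, and 0.95g to roughly 9.3 meters per second squared.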

“We focused on a segment of the track with a variety of turns that provided the comparison we needed and allowed us to gather more data sets,” wrote Spielberg in an email to TechCrunch. “We have done full lap comparisons and the same trends hold. Shelley has an advantage of consistency while the human drivers have the advantage of changing their line as the car changes, something we are currently implementing.”

Shelley showed far lower variation in its times than the racer, but the racer also posted considerably lower times on several laps. The averages for the segments evaluated were roughly comparable, with a slight edge going to the human.

This is pretty impressive considering the simplicity of the self-driving model. It had very little real-world knowledge going into its systems, mostly the results of a simulation giving it an approximate idea of how it ought to be handling moment by moment. And its feedback was very limited — it didn’t have access to all the advanced telemetry that self-driving systems often use to flesh out the scene.

The conclusion is that this type of approach, with a relatively simple model controlling the car beyond ordinary handling conditions, is promising. It would need to be tweaked for each surface and setup — obviously a rear-wheel-drive car on a dirt road would be different than front-wheel on tarmac. How best to create and test such models is a matter for future investigation, though the team seemed confident it was a mere engineering challenge.

The experiment was undertaken in order to pursue the still-distant goal of self-driving cars being superior to humans on all driving tasks. The results from these early tests are promising, but there’s still a long way to go before an AV can take on a pro head-to-head. But I look forward to the occasion.

Source of the article – TechCrunch